Best Self-Hosted Wardrobe Apps in 2026

Your wardrobe says a lot about you. What you own, what you wear most, how you dress for different occasions — this is personal data in the most literal sense. And yet, most wardrobe apps ask you to upload photos of every item you own to someone else's server, tagged with metadata about your body, your lifestyle, and your daily habits.

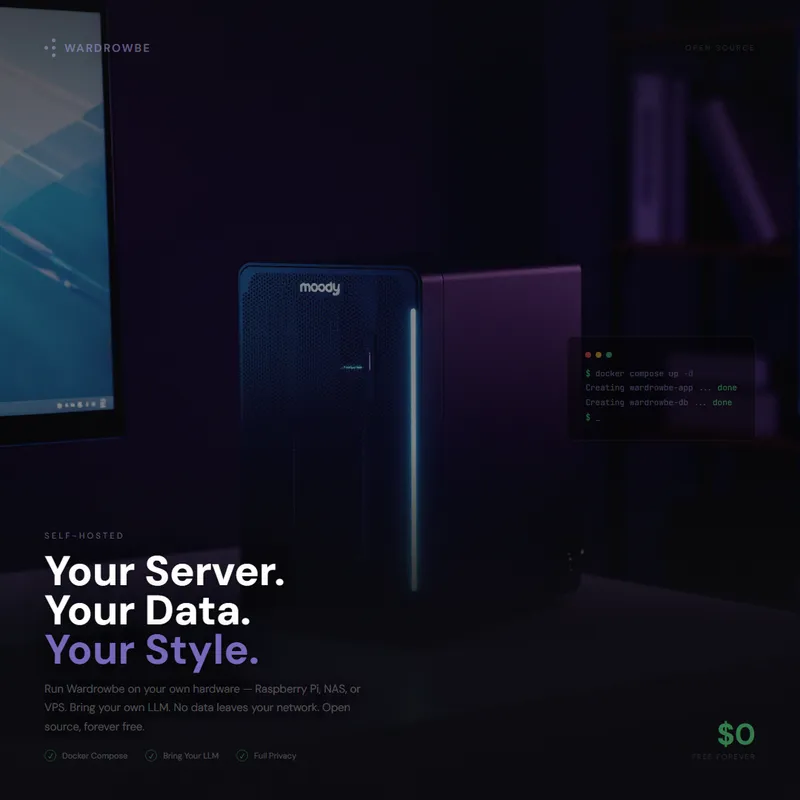

If that makes you uncomfortable, self-hosting is the answer. A self-hosted wardrobe app runs on hardware you control — a Raspberry Pi in your closet, a NAS you already own, or a cheap VPS. Your clothing photos and style preferences never leave your network unless you explicitly choose otherwise.

The catch? There aren't many options. Wardrobe management is a niche within a niche. This guide covers what's available, what works, and how to get started with a self-hosted wardrobe app that respects your privacy.

Why Self-Host a Wardrobe App

The reasons break down into three categories, and they're the same reasons people self-host anything from password managers to photo libraries.

Privacy

A cloud wardrobe service stores photos of your clothes, metadata about your body type, your daily outfit choices, and your location-based weather data. That's a detailed personal profile. With a self-hosted wardrobe app, this data lives on your hardware. Even if the app uses AI for tagging and suggestions, you can run the AI models locally too — meaning your clothing data never touches an external API.

Control

Cloud services shut down. They change pricing. They pivot to a different market. When the service you've spent months digitizing your wardrobe into decides to sunset, you're left with nothing, or with an export file in some proprietary format. A self-hosted instance keeps running on your hardware regardless of what happens to the vendor. You control the database, the backups, and the upgrade schedule.

Cost

Most wardrobe apps charge a monthly subscription. The numbers seem small, $5 to $10 per month, but they add up to $60 to $120 per year. A Raspberry Pi costs $60 to $100 once. A small VPS costs about $4 to $10 per month and can run your wardrobe app alongside a dozen other self-hosted services. The economics of self-hosting improve dramatically when you're already running a home server for other purposes.
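As a rough worked example of that comparison, the sketch below computes how many months of subscription fees it takes to match a one-time hardware purchase. It uses the $60 Pi and the $5 to $10 subscription figures from this section and deliberately ignores electricity, storage media, and your own time, so treat the result as a lower bound rather than a full cost model.

```python
import math


def break_even_months(hardware_cost: float, monthly_fee: float) -> int:
    """Months of subscription fees needed to equal a one-time hardware cost."""
    if monthly_fee <= 0:
        raise ValueError("monthly_fee must be positive")
    return math.ceil(hardware_cost / monthly_fee)


# Figures taken from the text: a $60 Raspberry Pi vs. a $5-$10/month app.
for fee in (5, 10):
    months = break_even_months(60, fee)
    print(f"${fee}/month subscription: a $60 Pi breaks even after {months} months")
```

At those prices the hardware pays for itself within 6 to 12 months, and sooner if the same machine already hosts other services.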

Self-hosting isn't for everyone. If you want zero infrastructure and don't mind cloud storage, a managed service is simpler. But if you already run Docker containers at home, adding a wardrobe app is trivial.

What to Look for in a Self-Hosted Wardrobe App

Not all wardrobe software is designed for self-hosting. Some "open source" projects are just a frontend that still requires a proprietary backend. Others are truly self-contained but lack basic features. Here's what matters:

| Feature | Why It Matters |
|---|---|
| Docker Compose deployment | One command starts everything: app, database, worker processes |
| Local AI support | Image tagging and outfit suggestions without sending data to external APIs |
| Mobile app | You need to photograph clothes and log outfits on the go, not just at a desktop |
| Automatic tagging | Manual tagging kills adoption; AI should detect type, color, style, and formality |
| Outfit suggestions | The app should actively suggest what to wear, not just be a passive catalog |
| Weather integration | Suggestions should account for today's weather conditions |
| Multi-user support | Households share a server, so each person should have their own wardrobe |
| Active development | Abandoned projects mean abandoned bug fixes and security patches |

The first two items are non-negotiable. If you can't deploy with Docker and can't run AI locally, the self-hosting value proposition collapses — you end up maintaining infrastructure while still sending data to the cloud.

Comparing Your Options

Let's be honest: the self-hosted wardrobe app space is thin. Fashion tech companies target mobile-first cloud users, not the self-hosting crowd. Here's what actually exists:

| App | Type | AI Tagging | Outfit Suggestions | Mobile App | Docker Support | Multi-User | Active Development |
|---|---|---|---|---|---|---|---|
| Wardrowbe | Dedicated wardrobe app | Yes (local via Ollama or cloud API) | Yes (weather + occasion + learning) | Yes (Expo/React Native) | Yes (Docker Compose) | Yes (family groups) | Yes |
| OpenWardrobe | Closet organizer | No | No | No (web only) | Community Docker image | No | Minimal |
| Grocy (clothing module) | Inventory manager with clothing add-on | No | No | Third-party apps | Yes | Yes | Yes (but clothing is secondary) |
| Monica HQ | Personal CRM with appearance tracking | No | No | No (web only) | Yes | No | Maintenance mode |

Wardrowbe

The only purpose-built, open-source wardrobe management app with AI integration. Vision models automatically tag clothing items when you photograph them — detecting type, color, pattern, style, and formality level. The outfit suggestion engine factors in weather, occasion, your learned preferences, and item pairing compatibility. It ships with a full mobile app (iOS and Android) alongside the web dashboard, and supports family groups where household members can share outfit ratings without sharing closets.

The AI layer works with any OpenAI-compatible API. Run Ollama locally for complete privacy, point it at a self-hosted LLM, or use an external API if you prefer accuracy over isolation. The choice is yours and it's a runtime configuration, not a code change.

OpenWardrobe

A straightforward closet cataloging tool. You manually add items with photos and tags, then browse your wardrobe by category. No AI, no outfit suggestions, no weather integration. It solves the "I forgot what I own" problem but nothing beyond that. Development has been slow, with occasional community contributions. If you just want a visual inventory of your clothes and nothing more, it works. For anything beyond cataloging, you'll outgrow it quickly.

Grocy (Clothing Module)

Grocy is a groceries and household management system that includes a generic inventory module. Some users repurpose this for clothing tracking by creating custom fields for garment type, color, and size. It works as a basic inventory database, but it was never designed for wardrobe management. There's no concept of outfits, no weather integration, no AI, and no pairing logic. Grocy is an excellent tool for what it's built for — just don't expect it to replace a dedicated wardrobe app.

Monica HQ

Monica is a personal relationship management tool that includes fields for tracking physical appearance and clothing preferences of your contacts. It's a stretch to call it a wardrobe app, but it occasionally appears in self-hosted wardrobe discussions. Monica went into maintenance mode in late 2024, and the clothing-related features were always peripheral. Not recommended for wardrobe management.

How Wardrowbe Works for Self-Hosting

Since Wardrowbe is the only option with full-stack wardrobe features and Docker support, it's worth walking through the setup.

Architecture

The self-hosted stack consists of four containers:

- Backend — FastAPI (Python) handling the API, item management, and outfit logic

- Frontend — Next.js web dashboard for desktop wardrobe management

- Worker — Background job processor for AI image tagging and notifications

- PostgreSQL — Database storing items, outfits, preferences, and learning data

An optional fifth component is your LLM provider. Ollama runs as a separate container (or on the host) and handles both vision (image tagging) and text (outfit generation) tasks.

Docker Compose Setup

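The whole stack is defined in a single docker-compose.yml, so deployment comes down to a handful of commands:

```bash
# Fetch the project and create a local configuration file
git clone https://github.com/Anyesh/wardrowbe.git
cd wardrowbe
cp .env.example .env

# Edit .env with your settings (domain, LLM endpoint, etc.)

# Start all containers in the background
docker compose up -d

# Apply database migrations
docker compose exec backend alembic upgrade head
```

That's the entire process. The first startup pulls images and initializes the database. Subsequent starts take seconds.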

LLM Integration

Wardrowbe talks to any OpenAI-compatible API for two tasks:

- Vision — analyzing clothing photos to extract type, color, pattern, style, and formality

- Text — generating outfit suggestions based on wardrobe contents, weather, occasion, and learned preferences

For a fully self-contained setup, run Ollama alongside Wardrowbe and configure the LLM endpoint in your .env file. Models like LLaVA handle the vision tasks, while Llama or Mistral handle text generation. If you have a GPU, these run fast. On CPU-only hardware like a Raspberry Pi, expect slower tagging — but it's a background job, so you won't be waiting for it.

Alternatively, point Wardrowbe at any external OpenAI-compatible API. The backend doesn't care where the AI lives, only that it speaks the right protocol.
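To make "speaks the right protocol" concrete, here is a minimal sketch of the kind of request an OpenAI-compatible vision endpoint accepts. It is not Wardrowbe's actual code: the function name, the prompt wording, and the `llava` model name are assumptions for illustration. The payload follows the chat completions message format, with the photo embedded as a base64 data URL.

```python
import base64
import json


def build_tagging_request(image_bytes: bytes, model: str = "llava") -> dict:
    """Build a chat-completions payload asking a vision model to tag one photo.

    Hypothetical helper: the prompt and default model name are illustrative.
    """
    image_b64 = base64.b64encode(image_bytes).decode("ascii")
    prompt = (
        "Describe this clothing item as JSON with the keys "
        "type, color, pattern, style, formality."
    )
    return {
        "model": model,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": prompt},
                    {
                        "type": "image_url",
                        "image_url": {"url": f"data:image/jpeg;base64,{image_b64}"},
                    },
                ],
            }
        ],
    }


# Placeholder bytes stand in for a real JPEG read from disk.
payload = build_tagging_request(b"\xff\xd8\xff placeholder image bytes")
print(json.dumps(payload, indent=2)[:200])
```

The same payload would be sent as a POST to the `/chat/completions` path under whichever base URL you configure. With Ollama on the host, that base URL is typically `http://localhost:11434/v1`; with an external provider, only the base URL, API key, and model name change.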

AI Features in Detail

The AI does more than tag images. Once your wardrobe is digitized, the system builds a comprehensive model of your clothing and your preferences:

- Automatic tagging — photograph an item, and vision AI extracts all metadata automatically

- Outfit suggestions — daily suggestions considering weather, occasion, and what you've worn recently

- Style learning — the engine tracks your feedback (accepts, skips, ratings) and adjusts future suggestions

- Smart pairings — select any item and see complete outfits built around it

- Gap analysis — identifies missing pieces that would unlock the most new outfit combinations, useful when building a capsule wardrobe

- Weather matching — integrates with Open-Meteo (free, no API key) to factor in temperature, rain, and wind

All of these features work identically in the self-hosted version and the cloud version. There's no feature gating — self-hosting gets the full product.
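As an illustration of the weather-matching idea, the sketch below builds an Open-Meteo forecast URL and turns a current-conditions reading into coarse clothing hints. The thresholds and hint labels are assumptions chosen for the example, not the rules Wardrowbe applies, and the sample reading is made up rather than fetched.

```python
from urllib.parse import urlencode

OPEN_METEO_URL = "https://api.open-meteo.com/v1/forecast"


def forecast_url(latitude: float, longitude: float) -> str:
    """Build a request URL for current temperature, precipitation, and wind."""
    query = urlencode(
        {
            "latitude": latitude,
            "longitude": longitude,
            "current": "temperature_2m,precipitation,wind_speed_10m",
        }
    )
    return f"{OPEN_METEO_URL}?{query}"


def clothing_hints(current: dict) -> list[str]:
    """Map current conditions to coarse hints (thresholds are illustrative)."""
    hints = []
    if current["temperature_2m"] < 10:  # degrees Celsius
        hints.append("warm layer")
    if current["precipitation"] > 0:  # millimetres
        hints.append("waterproof outerwear")
    if current["wind_speed_10m"] > 30:  # km/h
        hints.append("windproof layer")
    return hints


# Hand-written sample shaped like the "current" object of a forecast response.
sample = {"temperature_2m": 6.5, "precipitation": 0.4, "wind_speed_10m": 12.0}
print(forecast_url(52.52, 13.41))
print(clothing_hints(sample))
```

Keeping the rule step separate from the HTTP call means the hints can be tested without network access, and the same function works whether the reading comes from Open-Meteo or a cached value.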

Hardware Requirements

One of the most common questions about any self-hosted app: what hardware do I need? Here's a breakdown by deployment target:

| Hardware | CPU | RAM | Storage | AI Performance | Cost |
|---|---|---|---|---|---|
| Raspberry Pi 4/5 | 4-core ARM | 4-8 GB | 32 GB+ SD/SSD | Slow (CPU-only, small models) | $60-$100 |
| Old laptop / mini PC | 4+ core x86 | 8-16 GB | 128 GB+ SSD | Moderate (CPU, medium models) | $0-$200 |
| NAS (Synology/QNAP) | Varies | 4-8 GB | NAS storage | Slow-moderate (depends on model) | Already owned |
| VPS (Hetzner/Oracle) | 2-4 vCPU | 4-8 GB | 40 GB+ SSD | Moderate (CPU only, but fast cores) | $4-$10/month |
| Desktop with GPU | Any modern | 16+ GB | SSD | Fast (GPU-accelerated Ollama) | Already owned |

Minimum Requirements

Wardrowbe itself is lightweight. The backend, frontend, worker, and database together use roughly 1 GB of RAM at idle. Storage depends on how many clothing items you photograph — budget around 2 MB per item, so a 200-item wardrobe uses about 400 MB.

The AI component is what drives hardware requirements up. If you're running Ollama locally:

- 7B parameter models (LLaVA 7B, Mistral 7B) — need 4-6 GB RAM, run acceptably on most hardware

- 13B+ parameter models — need 10+ GB RAM, benefit significantly from a GPU

- Vision tasks — slower than text generation, especially on CPU
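The sizing figures above can be turned into a quick back-of-the-envelope check. This sketch uses only the numbers quoted in this section (about 1 GB for the app stack, about 2 MB per item, 4-6 GB for 7B models, 10+ GB for 13B+ models) and conservatively takes the upper end of the 7B range. The function names and the fallback label are made up for the example.

```python
APP_STACK_RAM_GB = 1.0   # backend + frontend + worker + database at idle
PHOTO_MB_PER_ITEM = 2.0  # rough per-item photo budget from the text


def photo_storage_mb(item_count: int) -> float:
    """Estimate photo storage for a wardrobe of the given size."""
    return item_count * PHOTO_MB_PER_ITEM


def local_model_tier(total_ram_gb: float) -> str:
    """Pick the largest model class that fits after the app stack is loaded."""
    free_for_model = total_ram_gb - APP_STACK_RAM_GB
    if free_for_model >= 10:
        return "13B+"
    if free_for_model >= 6:  # upper end of the 4-6 GB range for 7B models
        return "7B"
    return "external API or smaller model"


print(f"200 items: ~{photo_storage_mb(200):.0f} MB of photos")
for ram in (4, 8, 16):
    print(f"{ram} GB RAM: {local_model_tier(ram)}")
```

These are planning estimates only. Actual memory use depends on model quantization and on what else the machine is running.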

The Practical Approach

If you already have a home server or NAS, run Wardrowbe there and point it at an external AI API initially. This gets you up and running in minutes. Once you're comfortable with the app, experiment with local AI. You can switch between local and cloud AI at any time by changing a single environment variable — no data migration, no reconfiguration.
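The pattern behind that switch is ordinary environment-driven configuration. The sketch below shows the general idea. The variable names `LLM_BASE_URL`, `LLM_API_KEY`, and `LLM_MODEL` are hypothetical stand-ins, so check `.env.example` in the repository for the names Wardrowbe actually reads.

```python
import os
from dataclasses import dataclass
from typing import Mapping


@dataclass(frozen=True)
class LLMSettings:
    base_url: str
    api_key: str
    model: str


def load_llm_settings(env: Mapping[str, str] = os.environ) -> LLMSettings:
    """Read LLM connection settings, defaulting to a local Ollama instance.

    The variable names here are hypothetical, not Wardrowbe's real ones.
    """
    return LLMSettings(
        base_url=env.get("LLM_BASE_URL", "http://localhost:11434/v1"),
        api_key=env.get("LLM_API_KEY", "not-needed-locally"),
        model=env.get("LLM_MODEL", "llava"),
    )


# No variables set: fall back to the local endpoint.
local = load_llm_settings({})

# Pointing at an external provider changes configuration only, not code.
cloud = load_llm_settings(
    {
        "LLM_BASE_URL": "https://api.example.com/v1",
        "LLM_API_KEY": "example-key",
        "LLM_MODEL": "some-vision-model",
    }
)
print(local.base_url)
print(cloud.base_url)
```

Because the wardrobe data lives in PostgreSQL and only the endpoint changes, nothing stored in the database needs to move when the provider does.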

For dedicated hardware, a Raspberry Pi 5 with 8 GB RAM handles the app stack comfortably. AI tagging will be slow on the Pi (expect 30-60 seconds per image with a small model), but since tagging happens in the background, you photograph an item and move on. The tagged result appears about a minute later.

For the fastest self-hosted experience, a machine with even a modest GPU (something like a GTX 1060 or newer) running Ollama will tag items in under 5 seconds and generate outfit suggestions nearly instantly.

VPS as a Middle Ground

A VPS isn't technically "self-hosted" in the purest sense, since you're running on someone else's hardware. But you control the software, the data, and the encryption. For many people, a VPS in the $4 to $10 per month range from a provider like Hetzner, or an instance on Oracle's free tier, strikes the right balance between privacy and convenience. You get a fast, always-on server without managing physical hardware.

If you want true data sovereignty, pair a VPS with encrypted storage and your own backup strategy. Your wardrobe data stays encrypted at rest and in transit, and you hold the keys.

Self-Hosted vs. Cloud: Choosing Your Path

Wardrowbe offers both options, and the feature set is identical. The differences are operational:

| | Self-Hosted | Cloud |
|---|---|---|
| Data location | Your hardware | Wardrowbe servers |
| AI model choice | Any OpenAI-compatible API or local | Managed (high-accuracy models) |
| Maintenance | You handle updates and backups | Automatic |
| Uptime | Depends on your infrastructure | 99.9%+ |
| Cost | Free (you provide hardware) | Subscription plans |
| Setup time | ~5 minutes with Docker | Instant (sign up and go) |
| Mobile app | Same app, pointed at your server | Same app, pointed at cloud |

Neither option is universally better. If you value privacy and control above all else, self-host. If you want a service that just works without thinking about Docker, backups, or server uptime, the cloud version delivers that. Some users even run both — self-hosted at home for daily use, cloud for travel when their home server might be unreachable.

For common questions about either approach, check the FAQ.

Getting Started

- Self-host Wardrowbe with Docker Compose — free forever, ~5 minutes setup

- Or start a free trial of the cloud version if you prefer zero infrastructure

Explore all features or check the FAQ for common questions.